This is why we speak of "projected texture stereo vision". This ensures a dense 3D point cloud even on unicolored or ambiguously textured surfaces. During the image capture the texture projection unit augments the object’s own texture with a highly structured pattern to eliminate ambigui-ties in the stereo matching step. Texture projectionĮnsenso stereo cameras therefore integrates an additional texture projection unit. Ensenso cameras use special techniques to improve the classic Stereo Vision process, which results in a higher quality of depth information and more precise measurement results. Because texture perception is directly dependent on lighting conditions and the surface texture of objects in the scene, poorly textured or reflective surfaces have a direct impact on the quality of the resulting 3D point cloud. The surface is al-so textured with the left camera image (here converted to gray scale).Īs mentioned earlier, all stereo matching techniques require textured objects to reliably determine the correspondences between the left and right image. A visualization of the point cloud is shown in Figure 5.įigure 5: View of the 3D Surface generated from the disparity map and the camera calibration data. It is often stored as a three channel image to also keep the point’s neighboring information from the image’s pixel grid. The resulting XYZ data is called a point cloud. We can simply intersect the two rays of each associated left and right image pixel, as illustrated earlier in Figure 1. We can then again use the camera geometry obtained during calibration to convert the pixel based disparity values into actual metric X, Y and Z coordinates for every pixel. Figure 4 illustrates the dispari-ty notion.

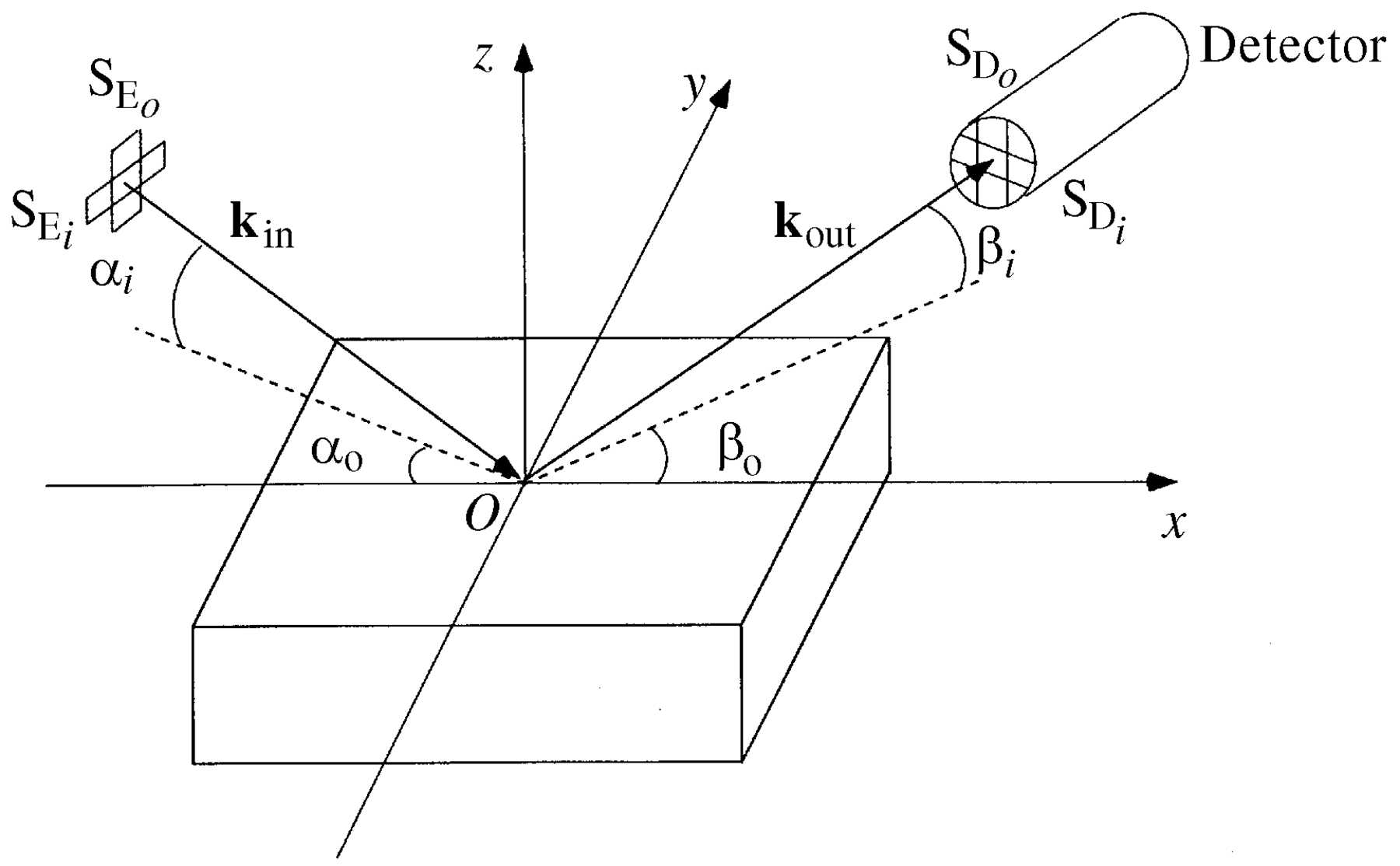

The values in the disparity map encode the offset in pixels, where the corre-sponding location was found in the right image. Regardless of what matching technique is used, the result is always an asso-ciation between pixels of the left and right image, stored in the disparity map. This will happen for occluded areas or reflections on the object, which appears differently in both cameras. A special value (here black) is used to indicate, that a pixel could not be identified in the right image. The disparity map represents depth information in form of pixel shifts between left and right image. Global methods are more complex and need more processing power than the local approach, but they require less texture on the surfaces and deliver more accurate results, especially at object boundaries.įigure 4: Result of image matching. This global assigment also takes into account that surfaces are mostly smooth and thus neighboring pixels will often have similar depths. They don’t just consider each pixel (or im-age patch) individually to search for a matching partner, instead they try to find an assignment for all left and right image pixels at once. Other methods, known as global stereo matching, can al-so exploit neighboring information. Thus, local stereo matching will fail in regions with poor or repetitive texture. Obviously, we can only match a region be-tween left and right image when it is sufficiently distinct from other image parts on the same scanline. This matching technique is called local stereo matching, as it only uses local information around each pixel. Correspondence search can be carried out along image scanlines. Bottom: Rectification makes epipolar lines aligned with the image axes. Middle: Removing image distortions results in straight epipolar lines. Top: The epipolar lines are curved in the distorted raw images. 
We can use this calibration data to triangulate corresponding points that have been identified in both images and recover their metric 3D coordinates with respect to the camera.įigure 2: Search space to match image locations is only one dimensional. the rotation and shift in three dimensions be-tween the left and right camera. The model consists of the so-called in-trinsic parameters of each camera like the camera’s focal length and distortion and the extrinsic parameters, i.e. One can then use the pixel locations of the pattern’s dots in each image pair and their known positions on the cali-bration plate to compute both, the 3D poses of all observed patterns, and an accurate model of the stereo camera. Then we capture synchronous image pairs, showing the pattern different positions, ori-entations and distances in both cameras. Usually this is a planar calibration plate with a checkerboard or dot pattern of known size. The geometry of the two-camera system is computed a priori in the stereo cal-ibration process.
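As a rough illustration of the matching and reconstruction steps described above, the following Python sketch runs a naive local matcher along scanlines and then converts the resulting disparities into metric coordinates. It is not the Ensenso implementation or API: the function names, the parameters `f`, `cx`, `cy` and `baseline`, and the sum-of-absolute-differences cost are assumptions chosen for illustration. It also assumes already rectified images, an ideal pinhole model with the same focal length in both cameras, and the common convention that a left pixel at column x is found at column x - d in the right image, so that depth follows Z = f · B / d.

```python
import math
import random


def block_match_sad(left, right, max_disp=8, half=2):
    """Naive local stereo matching on rectified grayscale images.

    For each left pixel, compare a (2*half+1)^2 patch against patches on the
    same scanline of the right image, shifted by 0..max_disp pixels, and keep
    the shift with the lowest sum of absolute differences (SAD).
    Pixels too close to the border stay None (no disparity available).
    """
    h, w = len(left), len(left[0])
    disp = [[None] * w for _ in range(h)]
    for y in range(half, h - half):
        for x in range(half + max_disp, w - half):
            best_d, best_cost = 0, math.inf
            for d in range(max_disp + 1):
                cost = sum(
                    abs(left[y + dy][x + dx] - right[y + dy][x + dx - d])
                    for dy in range(-half, half + 1)
                    for dx in range(-half, half + 1)
                )
                if cost < best_cost:
                    best_d, best_cost = d, cost
            disp[y][x] = best_d
    return disp


def disparity_to_points(disp, f, cx, cy, baseline):
    """Convert a disparity map into a grid of (X, Y, Z) tuples.

    Assumed model: f is the focal length in pixels, (cx, cy) the principal
    point, baseline the camera distance in metric units. The output keeps the
    pixel grid layout, so neighboring points stay neighbors. Missing or
    non-positive disparities yield None.
    """
    points = []
    for v, row in enumerate(disp):
        out_row = []
        for u, d in enumerate(row):
            if d is None or d <= 0:
                out_row.append(None)
                continue
            z = f * baseline / d
            out_row.append(((u - cx) * z / f, (v - cy) * z / f, z))
        points.append(out_row)
    return points


if __name__ == "__main__":
    random.seed(0)
    # Synthetic, well-textured left image (random gray values).
    left = [[random.randrange(256) for _ in range(40)] for _ in range(12)]
    # Synthetic right image: every left pixel reappears 4 columns further left.
    right = [row[4:] + row[:4] for row in left]
    disp = block_match_sad(left, right)
    cloud = disparity_to_points(disp, f=500.0, cx=20.0, cy=6.0, baseline=0.1)
    print(disp[6][20], cloud[6][20])
```

The random test image is deliberately well textured. On a uniform or repetitive image many shifts would produce the same cost and the chosen disparity would be arbitrary, which is the failure mode of local matching that projected texture is meant to avoid.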
